Intro

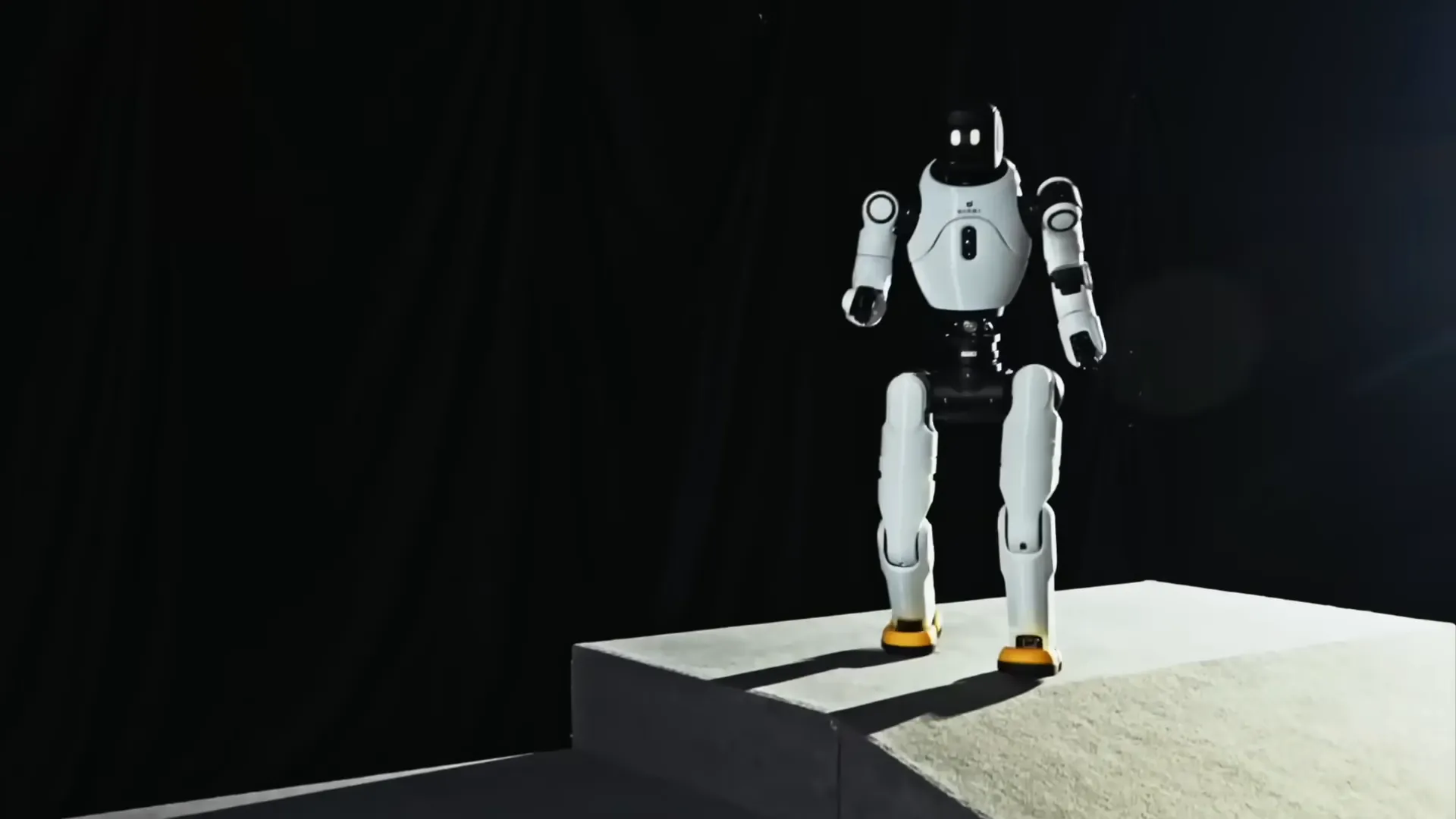

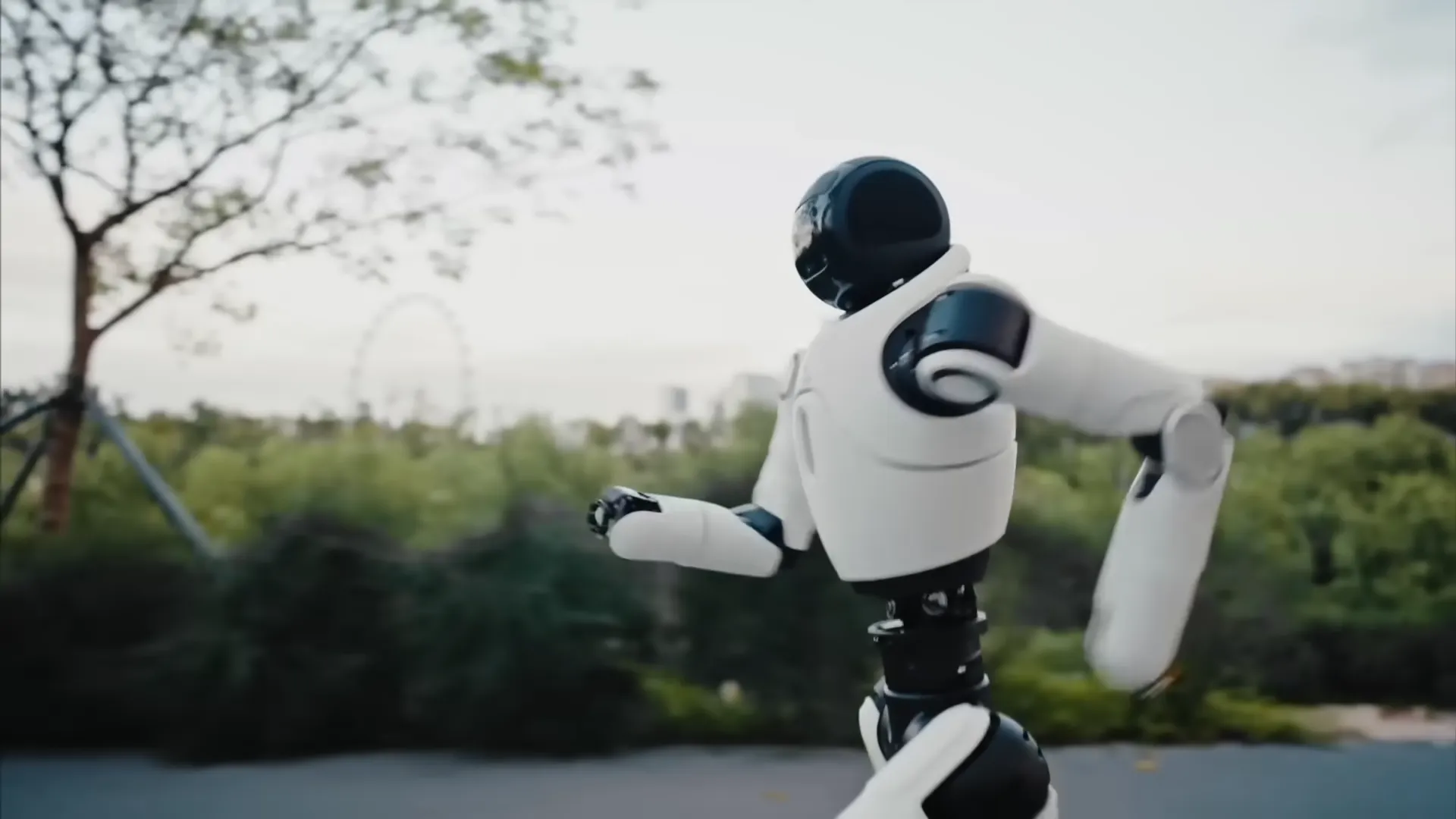

The AgiBot X2 and X2 Ultra share identical physical dimensions of 1,310 mm (H) x 460 mm (W) x 210 mm (L). The base X2 weighs approximately 35 kg and the X2 Ultra approximately 39 kg. The X2 provides 25 degrees of freedom (DoF) in total: 5 per arm (10 total), 3 at the waist, and 6 per leg (12 total), with no dedicated neck DoF. The X2 Ultra expands to 30 DoF by increasing each arm to 7 DoF (14 total) and adding 1 DoF for neck articulation. Arm reach in both variants is 558 mm, excluding the end-effector. Maximum payload is 3 kg in specific static postures, with a continuous payload of 1 kg or less across the full range of motion, reflecting an arm design optimised for interaction rather than sustained load-bearing. All joints are driven by hollow-shaft actuators with a peak joint torque of 120 Nm, and the Xyber-Edge cerebellum controller and Xyber-DCU domain controller manage low-latency joint coordination.
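The DoF totals quoted above can be checked with a short tally. The sketch below is only an illustration: the dictionary layout and the function names are hypothetical and do not correspond to any AgiBot data format or SDK; only the per-segment joint counts come from the text.

```python
# Per-segment joint counts from the specification text, stored as
# (DoF per segment, number of segments). The structure itself is an
# assumption made for this example, not a vendor format.
DOF_LAYOUT = {
    "X2": {"arm": (5, 2), "waist": (3, 1), "leg": (6, 2), "neck": (0, 1)},
    "X2 Ultra": {"arm": (7, 2), "waist": (3, 1), "leg": (6, 2), "neck": (1, 1)},
}


def total_dof(variant: str) -> int:
    """Sum DoF-per-segment times segment count for one variant."""
    return sum(per_segment * count for per_segment, count in DOF_LAYOUT[variant].values())


def dof_delta(base: str, other: str) -> dict:
    """Return the segments whose total DoF differ, with the difference (other - base)."""
    delta = {}
    for segment, (per_segment, count) in DOF_LAYOUT[other].items():
        base_per_segment, base_count = DOF_LAYOUT[base][segment]
        diff = per_segment * count - base_per_segment * base_count
        if diff != 0:
            delta[segment] = diff
    return delta


if __name__ == "__main__":
    for name in DOF_LAYOUT:
        print(name, total_dof(name))
    print(dof_delta("X2", "X2 Ultra"))
```

The tally confirms that the five extra DoF on the X2 Ultra come from two additional joints in each arm (four in total) plus the single neck joint; the waist and legs are unchanged.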

Both variants are powered by a 500 Wh swappable battery that recharges in under 1.5 hours (about 90 minutes) at 54.6 V, 10 A, and both support input voltages of 100 to 220 V. Each uses two RK3588 processors as its main compute platform; the X2 Ultra additionally integrates an NVIDIA Orin NX module rated at 157 TOPS for AI inference workloads. The base X2's perception system consists of an interactive RGB camera and a head-touch sensor. The X2 Ultra expands this with dual front RGB cameras, a rear RGB camera, an RGB-D depth camera, and a multi-line LiDAR for 3D SLAM, and it adds 4G/5G cellular connectivity to the WiFi and Bluetooth found on the base model. Both variants include a microphone array, a wireless microphone, and a speaker for voice interaction, along with an interactive display screen and LED lighting effects. OTA updates and mobile app control are supported on both variants; secondary development access and end-effector compatibility (OmniHand and OmniPicker) are exclusive to the X2 Ultra.
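As a rough sanity check on the charging figures, the sketch below estimates an idealised charge time from the stated capacity and charger rating. It assumes constant-power charging with no losses and no taper, which real lithium chargers do not achieve, so the result is a lower bound rather than a predicted charge time. The function name is hypothetical.

```python
def ideal_charge_minutes(capacity_wh: float, volts: float, amps: float) -> float:
    """Lower-bound charge time in minutes, assuming constant power and no losses."""
    power_w = volts * amps  # charger power in watts
    return capacity_wh / power_w * 60.0


if __name__ == "__main__":
    minutes = ideal_charge_minutes(capacity_wh=500.0, volts=54.6, amps=10.0)
    print(f"Idealised charge time: {minutes:.1f} min")
```

At 54.6 V and 10 A the charger supplies about 546 W, so an empty 500 Wh pack would take roughly 55 minutes in this idealised case. That is consistent with the quoted ceiling of 1.5 hours once charging losses and the slower final charging phase are allowed for.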